You trained a model. It works beautifully in your notebook: 94% accuracy, loss curves flattening right on schedule. You're proud of it.

Then someone asks: "Can we deploy this to production by Friday?"

That question has humbled more ML engineers than any benchmark ever will. Because building models and running them reliably at scale are two entirely different disciplines. And in 2026, the gap between those two worlds is both wider and better understood than it's ever been.

Here's the honest truth about the current state of machine learning: the fundamentals haven't changed. Backprop still works. Gradient descent still works. Your statistics textbook is still relevant. What has changed — significantly — is the landscape of techniques, architectural choices, and production practices available to practitioners. This guide covers what actually matters if you're building real systems.

The Shift in What "ML Work" Looks Like

A few years ago, most ML practitioners spent the majority of their time on data pipelines, feature engineering, and classical model selection. That picture has changed.

The rise of powerful pre-trained foundation models has moved the center of gravity. For many production applications, the core ML work is no longer "design and train a model from scratch" — it's "adapt an existing capable model to your domain, evaluate it rigorously, and deploy it reliably." That's a different skill set, with different leverage points.

This doesn't mean fundamentals are irrelevant. Far from it. Understanding gradient descent, regularization, the bias-variance tradeoff, and probability theory is what separates engineers who can debug a failing model from engineers who can only copy-paste code and hope for the best. But the applied workflow has shifted, and knowing where to invest your time has shifted with it.

Architectures: What's Actually Happening

Transformers Remain Dominant, But the Monoculture Is Cracking

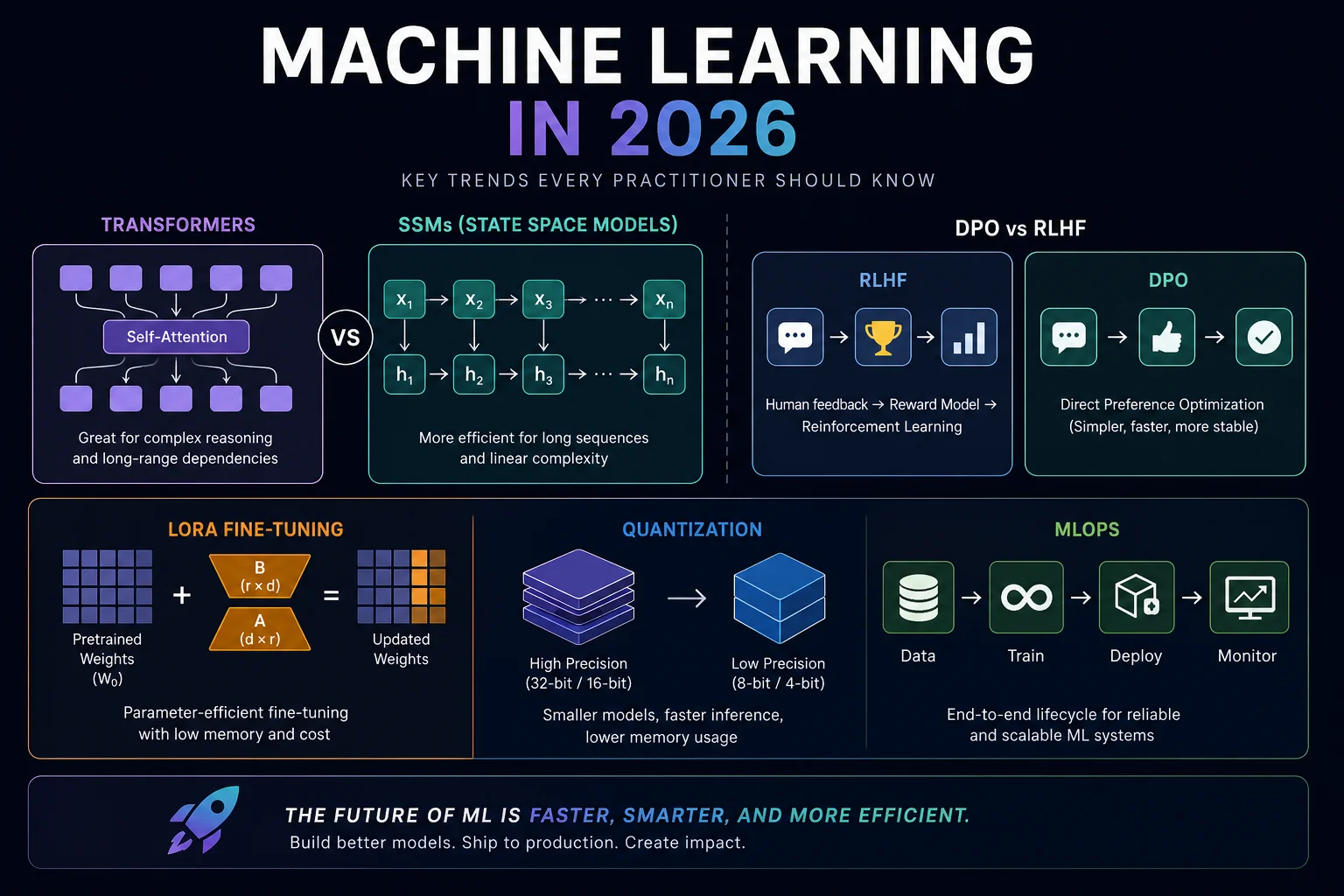

The transformer architecture, introduced in the 2017 "Attention Is All You Need" paper, remains the backbone of virtually every serious model in production. Self-attention is powerful: it lets every token attend to every other token, capturing long-range dependencies in ways that earlier architectures couldn't. The limitation is quadratic scaling with sequence length — long-context tasks get computationally expensive fast.
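The quadratic cost is easiest to see in a minimal single-head self-attention sketch in NumPy. The sketch makes some simplifying assumptions: there are no learned query/key/value projections, no masking, and a single head. What matters is the shape of the score matrix. For n tokens it is n × n, so doubling the sequence length quadruples the number of scores that must be computed and stored.

```python
import numpy as np

def self_attention(x: np.ndarray) -> tuple[np.ndarray, np.ndarray]:
    """Single-head scaled dot-product self-attention.

    Simplification: queries, keys, and values are the input itself
    (no learned projection matrices). Returns (output, attention_weights).
    """
    n, d = x.shape
    q, k, v = x, x, x
    scores = q @ k.T / np.sqrt(d)                 # (n, n): every token scored against every token
    scores -= scores.max(axis=-1, keepdims=True)  # subtract row max for a numerically stable softmax
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)
    return weights @ v, weights

rng = np.random.default_rng(0)
for n in (128, 256, 512):
    out, w = self_attention(rng.standard_normal((n, 64)))
    # Each doubling of n quadruples the number of attention scores.
    print(n, w.shape, w.size)
```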

That limitation has driven serious research into alternatives. Several are worth understanding as a practitioner:

State Space Models (SSMs), particularly the Mamba family of architectures, handle sequences in linear time rather than quadratic time. They use selective state spaces that learn which information to retain and which to discard. On long-sequence tasks, they can match or outperform transformers at a fraction of the compute. The honest tradeoff: they are weaker on tasks that require recalling specific, arbitrary positions from earlier in the sequence, which is exactly what attention is built for.
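A deliberately tiny toy can illustrate the linear-time idea. This is not Mamba's actual parameterization, which uses multi-dimensional states, learned projections, and input-dependent discretization. The toy only shows the two properties described above: a fixed-size state that is updated once per token, and update gates that depend on the current input. The gating function and weights are hypothetical.

```python
import math

def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))

def toy_selective_scan(xs: list[float], w_decay: float = 2.0, w_in: float = 1.0) -> list[float]:
    """One pass over the sequence with a fixed-size (here scalar) state.

    a_t = sigmoid(w_decay * x_t) controls how much of the past state is kept;
    b_t = 1 - a_t controls how much of the current input is written.
    Both depend on x_t, which is what makes the update input-selective.
    """
    h = 0.0
    ys = []
    for x in xs:                  # O(n) total: each step touches only the state
        a = sigmoid(w_decay * x)
        b = 1.0 - a
        h = a * h + b * w_in * x
        ys.append(h)
    return ys

print(toy_selective_scan([1.0, 0.0, -2.0, 0.5]))
```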

Hybrid architectures are where some of the most productive current research is happening. The core idea: use attention surgically for the parts of a task that need it, and cheaper recurrent or SSM mechanisms for everything else. Several production models now use hybrid designs. The field has moved from "transformers forever" to "transformers where appropriate."

Retentive Networks and linear attention variants reformulate attention in ways that allow parallel training (like transformers) but recurrent inference (like RNNs), avoiding the growing KV-cache memory bottleneck that makes long-context transformer inference expensive. If you're deploying models with very long contexts and inference cost is a concern, these architectural choices matter.
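Plain causal linear attention shows the parallel-training / recurrent-inference duality most clearly. The sketch below assumes the elu(x) + 1 feature map used in some linear-attention work. It is not RetNet's retention mechanism, which additionally applies an exponential decay to past positions. Both functions compute the same outputs. The difference is that the recurrent one carries a fixed-size state instead of a cache that grows with every token.

```python
import numpy as np

def phi(x):
    # Positive feature map elu(x) + 1: x + 1 for x > 0, exp(x) otherwise.
    return np.where(x > 0, x + 1.0, np.exp(x))

def causal_linear_attention_parallel(q, k, v):
    """Training-style form: all positions at once with a causal mask."""
    a = phi(q) @ phi(k).T                    # (n, n) similarities, no softmax
    a = np.tril(a)                           # position t only sees positions i <= t
    return (a @ v) / a.sum(axis=-1, keepdims=True)

def causal_linear_attention_recurrent(q, k, v):
    """Inference-style form: a fixed-size state replaces the growing KV cache."""
    d_k, d_v = k.shape[1], v.shape[1]
    s = np.zeros((d_k, d_v))                 # running sum of outer(phi(k_i), v_i)
    z = np.zeros(d_k)                        # running sum of phi(k_i), used to normalize
    outs = []
    for q_t, k_t, v_t in zip(q, k, v):
        s += np.outer(phi(k_t), v_t)
        z += phi(k_t)
        outs.append(phi(q_t) @ s / (phi(q_t) @ z))
    return np.stack(outs)

rng = np.random.default_rng(0)
q, k, v = (rng.standard_normal((6, 4)) for _ in range(3))
print(np.allclose(causal_linear_attention_parallel(q, k, v),
                  causal_linear_attention_recurrent(q, k, v)))  # True
```

You would use the parallel form for training on full sequences. The recurrent form's memory stays constant no matter how long the context grows.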

The practical upshot: you don't need to implement these from scratch tomorrow. But understanding why the field is exploring sub-quadratic alternatives gives you real context for architecture decisions and helps you read the research literature intelligently rather than cargo-culting whatever architecture is most hyped.

Mixture of Experts Has Become Standard

Mixture of Experts (MoE) is now mainstream in frontier model design and worth understanding even if you're not building frontier models. The concept: instead of running every token through every parameter in the network, you route each token through a learned subset — the "experts" — and activate only those. The benefit is large-model capability with small-model compute per inference.
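Here is a minimal sketch of top-k routing. It assumes a linear router and softmax gates renormalized over the selected experts, which is a common design. Production MoE layers also add load-balancing losses, capacity limits, and batched expert dispatch, all omitted here. The expert functions in the usage lines are hypothetical stand-ins for feed-forward blocks.

```python
import numpy as np

def moe_layer(x, router_w, experts, top_k=2):
    """Sparse Mixture-of-Experts forward pass for a batch of tokens.

    x:        (n_tokens, d) token representations
    router_w: (d, n_experts) learned router weights
    experts:  list of callables, each mapping a (d,) vector to a (d,) vector
    Only top_k experts run per token; their outputs are mixed by
    softmax gates renormalized over the chosen experts.
    """
    logits = x @ router_w                             # (n_tokens, n_experts)
    out = np.zeros_like(x)
    for t in range(x.shape[0]):
        top = np.argsort(logits[t])[-top_k:]          # indices of the k highest router scores
        gate = np.exp(logits[t, top] - logits[t, top].max())
        gate /= gate.sum()                            # gates sum to 1 over the chosen experts
        for g, e in zip(gate, top):
            out[t] += g * experts[e](x[t])            # only k experts do any compute
    return out

rng = np.random.default_rng(0)
d, n_experts = 8, 4
mats = [rng.standard_normal((d, d)) for _ in range(n_experts)]
experts = [lambda h, m=m: np.tanh(h @ m) for m in mats]
y = moe_layer(rng.standard_normal((5, d)), rng.standard_normal((d, n_experts)), experts)
print(y.shape)  # (5, 8)
```

All four expert matrices have to be in memory even though each token only uses two of them. This is the deployment concern described next.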

The deployment challenge is that computation is sparse, but memory isn't — you still need all the experts loaded. For most practitioners, the relevant lesson is about model selection: when evaluating MoE-based models for deployment, memory requirements and expert loading behavior matter as much as raw parameter count.

Multimodal Is the New Default Expectation

The biggest architectural shift of the past year isn't any single new technique — it's the normalization of multimodality. Text-only models are increasingly specialized choices rather than defaults. Vision-language models, audio-language models, and models that process video natively are all in active production use.

If your current system treats vision and text as separate pipelines handled by separate models, it's worth asking whether that architecture is still the right choice — both for performance and operational complexity.

Training Methods: What's Actually Working

Pre-Training Is Still the Foundation

There's no shortcut to base capability. Pre-training on massive, diverse datasets is still what creates the foundation that everything else builds on. The scaling laws (more compute, more data, and bigger models tend to perform better, up to a point) still hold, though with diminishing marginal returns at the extreme frontier.

For most organizations, training a frontier model from scratch isn't the right investment. The cost is prohibitive, and the base models available are exceptionally capable. The question isn't usually "should we pre-train" — it's "which base model do we adapt, and how."

Post-Training: The Part That Actually Differentiates Models

The gap between a well post-trained model and a poorly post-trained one is large enough that post-training deserves as much engineering attention as model architecture. Here are the main methods in the current toolkit:

Supervised Fine-Tuning (SFT) is the standard first step: take labeled examples of the behavior you want, fine-tune on them. Reliable, well-understood, requires quality labeled data. The bottleneck is always data quality, not the technique itself.

RLHF (Reinforcement Learning from Human Feedback) trains a separate reward model from human preference comparisons, then uses RL — typically PPO — to optimize the main model against that reward. This is what made the original ChatGPT feel like ChatGPT. Still widely used; the main cost is the engineering complexity of maintaining a separate reward model and the expense of human preference labels at scale.

DPO (Direct Preference Optimization) reformulates alignment as a direct classification problem using preference pairs — no explicit reward model required. The result: fewer hyperparameters, less compute, similar or better alignment quality in many settings. It's been widely adopted because it delivers most of the RLHF benefit with significantly less overhead. Variants like KTO and ORPO address specific failure modes in the original DPO formulation.
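For reference, the DPO objective for a single preference pair is the negative log-sigmoid of a scaled difference between two log-probability ratios, one for each response, each comparing the policy with a frozen reference model. Below is a minimal plain-Python sketch. It assumes you already have the summed token log-probabilities for the chosen and rejected responses, which requires forward passes through both models (omitted here). `beta` controls how far the policy may drift from the reference.

```python
import math

def dpo_loss(policy_chosen_logp: float, policy_rejected_logp: float,
             ref_chosen_logp: float, ref_rejected_logp: float,
             beta: float = 0.1) -> float:
    """DPO loss for one preference pair.

    Inputs are summed log-probabilities of the full responses under the
    trainable policy and the frozen reference model.
    """
    chosen_ratio = policy_chosen_logp - ref_chosen_logp        # beta * this is the implicit reward
    rejected_ratio = policy_rejected_logp - ref_rejected_logp
    logits = beta * (chosen_ratio - rejected_ratio)
    # -log(sigmoid(logits)), computed in a numerically stable way for either sign
    if logits > 0:
        return math.log1p(math.exp(-logits))
    return -logits + math.log1p(math.exp(logits))
```

When the policy and reference agree exactly, the loss is log 2. It falls as the policy raises the chosen response's likelihood relative to the rejected one by more than the reference does.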

RLVR (RL with Verifiable Rewards) is the technique generating the most research excitement right now. Instead of expensive human preference labels, you use verifiable correctness signals as the reward — does the math answer check out? does the code pass the test suite? does the factual claim verify against a database? The "verifiable" part is what makes this scalable: you can generate enormous amounts of training signal from problems with deterministic ground truth, without human labelers. This has opened up post-training scaling as a serious research direction and is actively being extended beyond math and code to any domain where verification is feasible.
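Two hypothetical reward functions show what "verifiable" means in practice. The answer-extraction rule (take the last number in the output) is a simplifying assumption, since real pipelines typically require a structured final-answer format. Running model-generated code with `exec` is shown only for brevity. It is unsafe outside a sandboxed process with time and memory limits.

```python
import re

def math_reward(model_output: str, ground_truth: str) -> float:
    """1.0 if the last number in the output equals the reference answer, else 0.0."""
    numbers = re.findall(r"-?\d+(?:\.\d+)?", model_output)
    if not numbers:
        return 0.0
    return 1.0 if float(numbers[-1]) == float(ground_truth) else 0.0

def code_reward(candidate_src: str, tests: list[tuple[tuple, object]], fn_name: str) -> float:
    """Fraction of test cases the generated function passes.

    WARNING: exec() on model output is unsafe; run it in a sandbox in practice.
    """
    namespace: dict = {}
    try:
        exec(candidate_src, namespace)
        fn = namespace[fn_name]
    except Exception:
        return 0.0                        # code that fails to load earns no reward
    passed = 0
    for args, expected in tests:
        try:
            if fn(*args) == expected:
                passed += 1
        except Exception:
            pass                          # a crashing test case counts as a failure
    return passed / len(tests)
```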

Test-Time Compute: A New Dimension of the Quality-Cost Tradeoff

One of the most significant practical shifts in the past couple of years is the rise of inference-time scaling: spending more compute at inference time to improve outputs, rather than training larger base models.

The core idea: give a model space to reason through a problem ("thinking tokens," chain-of-thought reasoning, iterative self-correction) before producing its final answer. On hard reasoning tasks, this approach dramatically improves performance. The gains on mathematical and scientific reasoning benchmarks have been striking enough to represent a qualitative capability shift, not just a marginal improvement.
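Self-consistency is one simple, well-known way to trade inference compute for quality. It is an illustration here rather than one of the techniques named above. You sample several independent reasoning paths and take a majority vote over their final answers. The sketch assumes a hypothetical `sample_answer` callable that stands in for one stochastic model call and returns only the extracted final answer. Cost grows linearly with the number of samples.

```python
import random
from collections import Counter
from typing import Callable

def self_consistency(sample_answer: Callable[[], str], n_samples: int) -> tuple[str, float]:
    """Sample n answers; return the majority answer and its vote share.

    sample_answer stands in for one stochastic model call (e.g. chain-of-thought
    at temperature > 0) that returns only the extracted final answer.
    """
    votes = Counter(sample_answer() for _ in range(n_samples))
    answer, count = votes.most_common(1)[0]
    return answer, count / n_samples

# Hypothetical noisy solver: right 60% of the time, otherwise one of two wrong answers.
rng = random.Random(0)
noisy_solver = lambda: rng.choices(["42", "41", "24"], weights=[0.6, 0.2, 0.2])[0]
print(self_consistency(noisy_solver, 1))    # one sample: cheap, but often wrong
print(self_consistency(noisy_solver, 25))   # 25 samples: 25x the cost, far more stable
```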

The practical tradeoff this creates: a latency-vs-quality dimension that didn't exist before. Thinking through a complex problem takes time and compute. For customer support, autocomplete, or high-throughput classification, fast and good-enough is usually right. For medical analysis, code generation, financial modeling, or complex multi-step reasoning — spending more compute at inference to get substantially better answers is often worth it. Choosing between these modes is now an architectural decision.

Parameter-Efficient Fine-Tuning: You Probably Don't Need Full Fine-Tuning

For most domain adaptation tasks, you don't need to update all the model weights. LoRA (Low-Rank Adaptation) freezes the pre-trained weights and injects small trainable low-rank matrices into the transformer layers, so you're fine-tuning a tiny fraction of parameters while capturing most of the adaptation benefit. QLoRA extends this by keeping the frozen base model in 4-bit precision, which makes it possible to fine-tune large models on consumer hardware that couldn't hold the full-precision model.
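Here is a minimal NumPy sketch of the LoRA forward pass, following the original paper's formulation. The frozen weight W is augmented by a scaled low-rank product B @ A, and B is initialized to zero so training starts from the unmodified model. Gradients and the training loop are omitted, and the rank and alpha values are illustrative. In practice you would use a library such as Hugging Face PEFT rather than writing this by hand.

```python
import numpy as np

class LoRALinear:
    """A frozen linear layer W plus a trainable low-rank update B @ A.

    Effective weight: W + (alpha / r) * B @ A, with A of shape (r, d_in)
    and B of shape (d_out, r). B starts at zero, so the adapted layer
    initially matches the frozen one exactly.
    """
    def __init__(self, w_frozen: np.ndarray, r: int = 8, alpha: float = 16.0, seed: int = 0):
        d_out, d_in = w_frozen.shape
        rng = np.random.default_rng(seed)
        self.w = w_frozen                                # never updated during fine-tuning
        self.a = rng.standard_normal((r, d_in)) * 0.01   # trainable
        self.b = np.zeros((d_out, r))                    # trainable, zero-initialized
        self.scale = alpha / r

    def __call__(self, x: np.ndarray) -> np.ndarray:
        # x: (batch, d_in). The low-rank path never builds a full d_out x d_in update matrix.
        return x @ self.w.T + self.scale * (x @ self.a.T) @ self.b.T

    def trainable_params(self) -> int:
        return self.a.size + self.b.size

d = 1024
layer = LoRALinear(np.zeros((d, d)), r=8)
print(layer.trainable_params(), d * d)  # 16384 1048576
```

For a 1024 × 1024 projection with rank 8, the adapter holds 16,384 trainable values, compared with 1,048,576 in the frozen matrix (about 1.6%).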

The tooling ecosystem (Hugging Face's PEFT library, Unsloth for speed optimization) is mature and practical. LoRA/QLoRA should be your first stop before committing to full fine-tuning. The reasons to do full fine-tuning — radical domain shift, catastrophic forgetting issues, production requirements for maximum performance — are real but rarer than people assume.

The Efficiency Imperative: Smaller Models, Bigger Results

Here's something counterintuitive: some of the most practically impactful ML work right now isn't at the billion-dollar frontier. It's in the 1–8 billion parameter range.

Research has consistently shown that smaller models trained on larger, more diverse, higher-quality datasets often outperform larger models trained on narrower data. This has pushed serious work into maximizing output with fewer parameters — and the results have been striking enough that "bigger is always better" is no longer a safe assumption.

The efficiency case is concrete. Smaller models require less hardware, respond faster, cost less per inference, and can run on-device rather than requiring cloud infrastructure. For latency-sensitive applications, for use cases where data can't leave the device, and for organizations without hyperscaler budgets, this matters enormously.

Quantization is the key enabling technique. Instead of storing model weights as 32-bit or 16-bit floating point numbers, you store them at lower precision — INT8, INT4, sometimes 3-bit. You accept some precision loss in exchange for substantial memory and compute savings.
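The simplest scheme is symmetric per-tensor quantization. You scale the weights so the largest magnitude maps to the largest representable integer, round, and store the integers plus one float scale. The sketch below is an illustrative baseline only. Production methods usually use per-channel or per-group scales plus calibration. Real INT4 kernels also pack two 4-bit values per byte, whereas this sketch stores them in an int8 container.

```python
import numpy as np

def quantize_symmetric(w: np.ndarray, bits: int = 8) -> tuple[np.ndarray, float]:
    """Symmetric per-tensor quantization to signed integers (bits <= 8)."""
    qmax = 2 ** (bits - 1) - 1                  # 127 for INT8, 7 for INT4
    scale = float(np.abs(w).max()) / qmax
    if scale == 0.0:
        scale = 1.0                             # all-zero tensor: any scale works
    q = np.clip(np.round(w / scale), -qmax, qmax).astype(np.int8)
    return q, scale

def dequantize(q: np.ndarray, scale: float) -> np.ndarray:
    return q.astype(np.float32) * scale

rng = np.random.default_rng(0)
w = rng.standard_normal((256, 256)).astype(np.float32)
for bits in (8, 4):
    q, s = quantize_symmetric(w, bits)
    err = np.abs(w - dequantize(q, s)).mean()
    print(f"INT{bits}: mean abs reconstruction error {err:.4f}")
```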

The practical numbers are worth knowing:

- 4-bit quantization typically reduces model memory by 60–70% while maintaining accuracy within 2–5% of full-precision baselines.

- Inference speed improves 2–3× versus FP16 baselines on edge hardware.

- Energy consumption drops 35–50% in INT4 configurations on constrained devices.

- Modern quantized models can achieve over 99% accuracy recovery on complex reasoning benchmarks compared to their full-precision counterparts, a result that would have been surprising a couple of years ago.
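You can check the memory arithmetic behind figures like these directly. The sketch below counts weight storage only, for a hypothetical 7B-parameter model. The 4.5 bits-per-weight row assumes one 16-bit scale per group of 32 weights. That overhead is illustrative, but it helps explain why practical savings land below the theoretical 75% reduction from FP16.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Memory for model weights alone (no KV cache, activations, or runtime overhead)."""
    return n_params * bits_per_weight / 8 / 1e9

n = 7e9  # hypothetical 7B-parameter model
for name, bits in [("FP32", 32), ("FP16", 16), ("INT8", 8),
                   ("INT4", 4), ("INT4 + per-group scales", 4.5)]:
    print(f"{name:>24}: {weight_memory_gb(n, bits):5.1f} GB")
```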

The bar for on-device LLM deployment has dropped substantially. Models that would have required a data center to run a couple of years ago can now run acceptably on consumer laptops and mobile hardware. If your use case allows for a domain-specific fine-tuned small model, the cost and latency advantages over cloud-hosted frontier inference are real and growing.

MLOps: The Part Everyone Still Underestimates

Let's be direct: most ML projects fail. Not because the models are bad. Because the systems around them are inadequate.

The numbers are sobering: the majority of ML projects never reach production, and of those that do, a significant portion fail to sustain business value within the first year. A systematic literature review found that over half of companies cite lack of adequate MLOps practices as a major obstacle to ML deployment. This is a solved problem in the sense that we know what to do — it's unsolved in the sense that most organizations don't do it.

MLOps — applying DevOps principles to the machine learning lifecycle — has moved from a nice-to-have to a hard requirement for any organization running ML at scale. The ROI on doing it right is substantial and well-documented.

What Actually Breaks in Production

Data drift is the silent killer. Your training data reflects the world as it was. The production environment reflects the world as it is. These diverge — sometimes suddenly (market events, regulatory changes, product updates), sometimes gradually (shifting user behavior, seasonality, changing product features). Models that looked great at deployment quietly degrade. Without monitoring, you won't know until something breaks loudly and visibly. By then, you've already lost business value.
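The population stability index (PSI) is one common way to quantify drift on a single feature. It compares the binned distribution of a production sample against the training sample. Below is a minimal pure-Python sketch; the binning strategy (equal-width over the reference range) and the epsilon are assumptions. Drift-monitoring tools automate statistics like this across many features. A frequently quoted rule of thumb treats PSI below 0.1 as stable and above 0.25 as significant drift, though thresholds are best tuned per feature.

```python
import math
import random

def population_stability_index(expected: list[float], actual: list[float], n_bins: int = 10) -> float:
    """PSI between a reference (training) sample and a production sample.

    Bins are equal-width over the reference range; production values outside
    that range are clipped into the edge bins. A small epsilon avoids log(0).
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / n_bins or 1.0

    def bin_fractions(values):
        counts = [0] * n_bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), n_bins - 1)] += 1
        return [c / len(values) for c in counts]

    eps = 1e-6
    psi = 0.0
    for e, a in zip(bin_fractions(expected), bin_fractions(actual)):
        e, a = max(e, eps), max(a, eps)
        psi += (a - e) * math.log(a / e)
    return psi

rng = random.Random(0)
train = [rng.gauss(0, 1) for _ in range(5000)]
same = [rng.gauss(0, 1) for _ in range(5000)]       # production matches training
shifted = [rng.gauss(0.5, 1) for _ in range(5000)]  # production mean has drifted
print(round(population_stability_index(train, same), 3))
print(round(population_stability_index(train, shifted), 3))
```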

Training-serving skew causes a surprisingly large share of production failures that look like model problems but aren't. During training, your feature pipeline runs in batch over historical data. In production, it runs in real time. Subtle differences in how features are computed (timestamp handling, missing-value imputation, aggregation windows) produce inputs the model never actually saw during training. Performance degrades in ways that are hard to attribute correctly. A properly configured feature store, with one shared feature definition for training and serving and point-in-time correct feature retrieval, prevents most failures of this class.
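Point-in-time correctness means each training row gets the feature value that was actually available at the moment of the event, never a later one. Below is a minimal sketch of that as-of join. It uses hypothetical in-memory data structures. Feature stores such as Feast perform this kind of join against real offline storage.

```python
from bisect import bisect_right

def point_in_time_join(events, feature_history):
    """Attach to each event the latest feature value known at event time.

    events:          list of (entity_id, event_ts) for training labels
    feature_history: dict entity_id -> list of (ts, value), sorted by ts
    Values written after event_ts are never used, so no future
    information leaks into training rows.
    """
    rows = []
    for entity, ts in events:
        history = feature_history.get(entity, [])
        timestamps = [t for t, _ in history]
        i = bisect_right(timestamps, ts)        # number of updates with t <= ts
        value = history[i - 1][1] if i > 0 else None
        rows.append((entity, ts, value))
    return rows

history = {"user_1": [(10, 3.0), (20, 5.0), (30, 8.0)]}
print(point_in_time_join([("user_1", 25), ("user_1", 5)], history))
# [('user_1', 25, 5.0), ('user_1', 5, None)]
```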

Reproducibility gaps compound silently over time. When something goes wrong six months from now (a regulatory audit, a production incident, an unexplained performance drop), you need to know exactly what data, what code, and what hyperparameters produced the model in question. Without systematic versioning from day one, incident investigation becomes forensic archaeology. Tools like DVC for data versioning and MLflow for experiment tracking and model registry exist specifically to make this tractable. They're not overhead; they're insurance.
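The core of what such tools record can be sketched with the standard library alone. You need content hashes of the input data, the code version, and the hyperparameters, combined into a stable run identifier. The `build_run_manifest` helper below is hypothetical. It illustrates the idea behind DVC and MLflow rather than replacing them, and it stores nothing and tracks no model artifacts.

```python
import hashlib
import json
import platform

def file_sha256(path: str) -> str:
    """Content hash of a data file, read in 1 MiB chunks."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(1 << 20), b""):
            h.update(chunk)
    return h.hexdigest()

def build_run_manifest(data_paths, code_version, hyperparams):
    """Record what produced a model: data hashes, code version, hyperparameters."""
    manifest = {
        "data": {p: file_sha256(p) for p in sorted(data_paths)},
        "code_version": code_version,        # e.g. a git commit SHA
        "hyperparams": hyperparams,
        "python": platform.python_version(),
    }
    # Stable ID: identical inputs always produce the same hash.
    payload = json.dumps(manifest, sort_keys=True).encode()
    manifest["run_id"] = hashlib.sha256(payload).hexdigest()[:12]
    return manifest
```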

The teams that build monitoring and reproducibility in from the start spend less time on incident response and more time improving models. The teams that treat it as something to add later spend more time firefighting.

LLMOps: A Different Category of Problem

Traditional MLOps assumed a discrete, relatively predictable artifact: a model with a defined input schema, roughly deterministic outputs, and measurable performance metrics. LLMs break all three of those assumptions simultaneously.

Users can input anything. Outputs are non-deterministic. "Correctness" is often subjective and context-dependent. And a single LLM inference can cost orders of magnitude more than a traditional ML prediction, which makes cost management, token optimization, and intelligent routing engineering priorities rather than afterthoughts.

In production, LLM systems are rarely single models. They're orchestrations of foundation models, fine-tuned adapters, retrieval pipelines, guardrail layers, routing logic, and feedback mechanisms. Each component has its own lifecycle and failure mode. Some of the uniquely LLM-specific challenges:

Prompt versioning and testing. Prompts are code. They need version control, testing, A/B experimentation, and rollback capability. A prompt that works beautifully in development can produce subtly different outputs after a base model update. If you're treating prompts as casual strings that live in a Notion document, you're running an operational risk.
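Treating prompts as code can start as simply as content-addressing each version and keeping its history for rollback. The in-memory registry below is a hypothetical sketch. In a real system, the versions would live in version control or a prompt-management tool, and each change would run through an evaluation suite before promotion.

```python
import hashlib
from dataclasses import dataclass, field

@dataclass(frozen=True)
class PromptVersion:
    name: str
    template: str
    model: str                          # the model this version was tested against

    @property
    def version_id(self) -> str:
        # Content-addressed: any edit to the template or target model changes the ID.
        payload = f"{self.name}\n{self.model}\n{self.template}".encode()
        return hashlib.sha256(payload).hexdigest()[:10]

    def render(self, **variables: str) -> str:
        return self.template.format(**variables)

@dataclass
class PromptRegistry:
    history: dict = field(default_factory=dict)   # name -> list of PromptVersion

    def register(self, p: PromptVersion) -> str:
        self.history.setdefault(p.name, []).append(p)
        return p.version_id

    def current(self, name: str) -> PromptVersion:
        return self.history[name][-1]

    def rollback(self, name: str) -> PromptVersion:
        # Drop the latest version and return the one before it.
        versions = self.history[name]
        if len(versions) < 2:
            raise ValueError("no earlier version to roll back to")
        versions.pop()
        return versions[-1]
```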

Evaluation at scale. How do you know your RAG pipeline improved? How do you measure whether your fine-tuned model is actually better for your use case? Traditional metrics don't capture what matters for generative outputs. Building automated evaluation frameworks — using other models as evaluators, designing task-specific rubrics, running adversarial test suites — is genuinely hard and genuinely necessary.
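Per-case scores can come from exact match, a rubric, or a model-based grader. Whatever the source, the comparison step should break results down by category so that an overall gain cannot hide a regression on a subset. Below is a minimal sketch; the score scale, category labels, and tolerance are assumptions.

```python
from collections import defaultdict

def compare_runs(cases, baseline_scores, candidate_scores, tolerance=0.02):
    """Compare two model versions on the same eval set, broken down by category.

    cases:     list of (case_id, category)
    *_scores:  dict case_id -> score in [0, 1]
    Returns per-category mean score deltas and the categories that regressed
    by more than the tolerance.
    """
    by_cat = defaultdict(list)
    for case_id, category in cases:
        by_cat[category].append(candidate_scores[case_id] - baseline_scores[case_id])
    deltas = {cat: sum(d) / len(d) for cat, d in by_cat.items()}
    regressions = sorted(cat for cat, d in deltas.items() if d < -tolerance)
    return deltas, regressions
```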

Cost-aware serving. Routing easy queries to smaller, cheaper models and hard queries to frontier models is an engineering capability that can reduce inference costs dramatically without sacrificing user experience. It requires good query classification and careful latency management, but the economics make it worth building for any system at meaningful scale.
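Here is a minimal routing sketch. The keyword-and-length heuristic and the per-token prices are hypothetical placeholders. A production router would use a trained classifier and real pricing, and it would log routing decisions so that misroutes can be evaluated.

```python
from dataclasses import dataclass

@dataclass
class ModelTier:
    name: str
    cost_per_1k_tokens: float    # hypothetical prices, for illustration only

SMALL = ModelTier("small-model", 0.0002)
FRONTIER = ModelTier("frontier-model", 0.01)

def looks_hard(query: str) -> bool:
    """Toy difficulty heuristic; a real router would use a trained classifier."""
    hard_markers = ("prove", "derive", "debug", "step by step", "analyze")
    return len(query.split()) > 60 or any(m in query.lower() for m in hard_markers)

def route(query: str) -> ModelTier:
    return FRONTIER if looks_hard(query) else SMALL

def blended_cost(queries: list[str], tokens_per_query: int = 1000) -> float:
    """Total cost if each query is served by the tier the router picks."""
    return sum(route(q).cost_per_1k_tokens * tokens_per_query / 1000 for q in queries)
```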

The practical tooling stack: MLflow for experiment tracking and model registry; DVC for data versioning; a feature store (Feast is the practical recommendation for small-to-mid-size teams); Evidently or WhyLabs for drift monitoring; Prometheus or Datadog for infrastructure observability; vLLM for high-throughput LLM inference serving; LangSmith or similar for LLM-specific tracing and evaluation.

The Trends Worth Paying Attention To

Agentic AI is past the demo phase. Multi-step autonomous AI systems (agents that plan, use tools, check their own work, and iterate toward a goal) are in production. The engineering challenges are real: how do you evaluate an agent? How do you debug a failure that occurred at step 7 of a 15-step workflow? How do you monitor a system that takes non-deterministic paths? These are areas of active work, not solved problems. If you're building or evaluating agentic systems, invest in evaluation infrastructure before you invest in capability.

Multi-agent protocols are standardizing. Anthropic's MCP (Model Context Protocol), alongside other emerging standards, is establishing the scaffolding for how models communicate with tools and with each other. This is infrastructure maturation — the same kind of standardization that made microservices practical. Understanding these protocols is becoming relevant for any practitioner building systems where models need to interact with external data sources or other AI components.

Open-weight models are genuinely competitive. The gap between open-weight and proprietary frontier models has narrowed substantially. For a wide range of production tasks, fine-tuned open-weight models deliver competitive performance at dramatically lower ongoing cost, with the additional advantages of data privacy (no external API calls), deployment flexibility, and customizability. If cost or data privacy is a constraint, the open-weight case has never been stronger.

Federated learning is moving from research to production. Privacy regulations are pushing organizations toward training on decentralized data. Federated learning lets models train locally on devices or institutional servers and share only model updates — not raw data. Combined with differential privacy, this is how regulated industries are deploying ML without centralizing sensitive information. Healthcare and financial services are the leading adopters. If you work in regulated industries, understanding federated learning is increasingly practical rather than academic.
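Federated averaging (FedAvg) is the canonical algorithm behind this approach. Each client runs a few local training steps on its own data, and the server averages the resulting weights, weighting each client by its dataset size. Below is a minimal NumPy sketch on a toy linear-regression task with synthetic data. It omits secure aggregation and differential privacy, which real deployments layer on top.

```python
import numpy as np

def local_update(weights, x, y, lr=0.1, epochs=5):
    """A few steps of least-squares gradient descent on one client's private data."""
    w = weights.copy()
    for _ in range(epochs):
        grad = 2 * x.T @ (x @ w - y) / len(y)
        w -= lr * grad
    return w

def federated_averaging(global_w, clients, rounds=20):
    """FedAvg: clients train locally; the server averages weights by dataset size.

    Only weight vectors leave each client; the raw (x, y) data never does.
    """
    for _ in range(rounds):
        updates = [local_update(global_w, x, y) for x, y in clients]
        sizes = np.array([len(y) for _, y in clients], dtype=float)
        global_w = np.average(updates, axis=0, weights=sizes)
    return global_w

rng = np.random.default_rng(0)
true_w = np.array([2.0, -1.0])
clients = []
for n in (50, 200, 120):                 # three clients with different amounts of data
    x = rng.standard_normal((n, 2))
    clients.append((x, x @ true_w + 0.01 * rng.standard_normal(n)))
print(federated_averaging(np.zeros(2), clients))   # approximately [2, -1]
```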

Physical AI is the next research wave. After years of diminishing returns from pure language model scaling, serious research attention is turning to AI that can sense, act, and learn in real physical environments — robotics, autonomous systems, embodied AI. This is longer-term for most practitioners, but investment signals are clear and the research community is actively working on the foundational problems. Worth tracking even if it's not immediately actionable for your current work.

Where to Actually Invest Your Time

If you're an ML practitioner figuring out where to focus in 2026, here's an honest prioritization:

Master the post-training stack. Pre-training is expensive and usually someone else's problem. Fine-tuning with LoRA/QLoRA, understanding RLHF vs DPO vs RLVR, knowing when inference-time scaling helps versus when a stronger base model is the right call — these are high-leverage skills you can apply immediately. The demand for practitioners who understand post-training deeply exceeds the supply.

Build evaluation competency. The hardest unsolved problem in applied ML isn't training — it's knowing whether your model is actually better. How do you evaluate a RAG pipeline? How do you catch when a fine-tuned model improved on your target task but regressed on adjacent tasks? How do you evaluate an agent's reasoning quality at scale? Building rigorous evaluation pipelines is underinvested relative to its impact, and it compounds: teams with good evaluation infrastructure improve faster than teams without it.

Learn MLOps infrastructure before you need it. The time to build monitoring, versioning, and reproducibility infrastructure is before production, not after the first incident. The tools are mature. The learning curve is real but manageable. The cost of skipping this is high and usually hits at the worst possible time.

Understand quantization practically. Running quantized models in production is no longer a research curiosity. Knowing the tradeoffs between precision formats, understanding which quantization approach fits which hardware target, and being able to benchmark accuracy degradation against inference gains — these are practical skills for anyone who cares about deployment economics.

Read the architecture literature broadly, not just deeply. You don't need to implement Mamba from scratch. But understanding why SSMs exist, what problem they solve, and what they trade off helps you make better architecture decisions and evaluate new work intelligently. Broad architectural literacy compounds over time in ways that narrow framework expertise doesn't.

A Note on Calibration

The capabilities available to ML practitioners in 2026 are genuinely impressive. The gap between what AI can do in benchmarks and what it does reliably in messy, real-world production has narrowed significantly. But it hasn't closed.

Agentic systems fail in non-obvious ways that are hard to catch without specific evaluation infrastructure. Multimodal models produce confident wrong answers on visual tasks. Small quantized models sometimes fall apart on specific domains that weren't well-represented in their training data. Benchmark leaderboards reward benchmark optimization in ways that don't always transfer to your actual use case.

The best ML engineers hold two things simultaneously: genuine recognition of what's now possible, and genuine rigor about testing specific systems before relying on them. That combination of informed optimism and empirical testing is what separates teams that ship reliable production ML from teams perpetually impressed by demos.

Build things. You can find more research and guides on Ai Herald.

Citations:

KernShell: "MLOps in 2026: Best Practices for Scalable ML Deployment." Covers feature stores, CI/CD for ML, monitoring, and the Gartner 2025 statistic on production sustain rates. 🔗 https://www.kernshell.com/best-practices-for-scalable-machine-learning-deployment/ (published ~April 2026)

Epoch AI: AI Trends Dashboard. Tracks training compute growth (5×/year since 2020), inference cost halving (~every 2 months), and pre-training efficiency improvements (3×/year). 🔗 https://epoch.ai/trends (updated February 2026)

Epoch AI: "LLM Inference Prices Have Fallen Rapidly but Unequally." Cottier, B., Snodin, B., Owen, D., & Adamczewski, T. (2025). Detailed analysis of inference price trends across benchmarks; found 9×–900× annual price drops depending on task. 🔗 https://epoch.ai/data-insights/llm-inference-price-trends (published March 12, 2025)